Human Performance and Cognition Ontology

Introduction

Background knowledge bases are well known to be valuable resources in scientific disciplines. They serve not only as standalone references for the basic knowledge of a discipline, but also assist in tasks such as information extraction, literature search, and exploration. Because constructing such knowledge bases requires timely and significant involvement from human experts, it is becoming increasingly important to explore computational techniques that automate the process and keep pace with the volume and dynamic nature of new information. Although manually built ontologies are of high quality (or precision), their automatic counterparts, which offer high recall, also suffice as backbones for focused browsing and knowledge discovery. More recently, semi-automatic approaches that combine human effort with automatic tools have gained prominence. This is because organizing knowledge bases and keeping them up to date in support of investigative research can only be accomplished with effective tools that match the needs of researchers. The human performance and cognition ontology (HPCO) project aims to fulfill these two major objectives:

- Build a knowledge base using semi-automatic domain hierarchy construction and relationship extraction from PubMed citations;

- Build a tool to browse and explore scientific literature with the help of the knowledge base created under the first objective.

The project involves extending our work in focused knowledge (entity-relationship) extraction from scientific literature, automatic taxonomy extraction from selected community-authored content (e.g., Wikipedia), and semi-automatic ontology development with limited expert guidance. These are combined into a framework that allows domain experts and computer scientists to create knowledge bases semi-automatically through an iterative process. The final goal is to provide life scientists with superior search and retrieval over scientific literature, in both quality and speed, so that they can elicit valuable information in the area of human performance and cognition. The project is funded by the Human Effectiveness Directorate of the Air Force Research Laboratory (AFRL) at Wright-Patterson Air Force Base.

Project Team

PI: Amit Sheth

Other associated faculty: T. K. Prasad

Students: Christopher Thomas, Wenbo Wang, Delroy Cameron

Postdocs: Ramakanth Kavuluru

Phase 1 Results and Future Work Presentation

The following slides were presented at AFRL during the discussion of the final results of Phase 1 of this project.

Project Architecture and Components

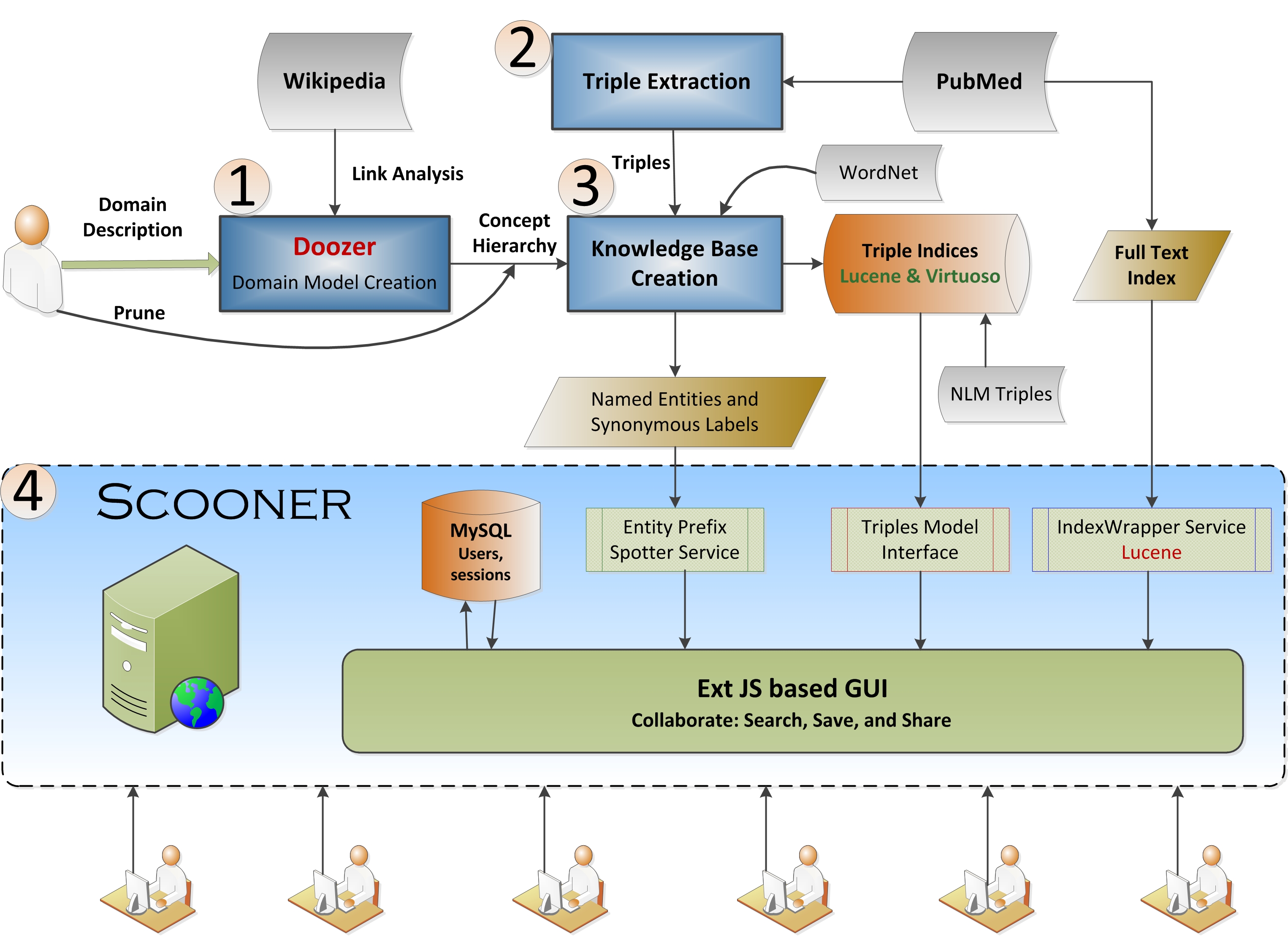

The two broad objectives mentioned in the introduction are accomplished using the following steps:

- An initial hierarchy of concepts in the area of human performance and cognition is built using our prior work on domain model extraction from Wikipedia. The model is approved by the experts at AFRL and forms the basis of the ontology. This component provides the focus for the ontology and its usage.

- Natural language processing (NLP) based entity and relationship extraction is performed on PubMed abstracts to facilitate enhanced information extraction. Because of the complex nature of the entities and the inherent variation in the writing styles of PubMed authors, this component is continually evolving, although the initial results are already promising. Relationships between concepts in the hierarchy formed in component 1 are also found through pattern-based approaches. These results complement those obtained using NLP-based techniques.

- Relationships (triples) extracted with the NLP and pattern-based techniques in step 2 are mapped onto the hierarchy built in step 1. A different set of triples, extracted from the Biomedical Knowledge Repository (BKR) of the NLM, is also incorporated as a separate dataset.

- While the first three components are the foundation, the search and query component is the one users interact with, and hence much of the current focus is on it. It provides efficient searching and browsing of the semantic trails made possible by the results from components 2 and 3.

The picture above shows the overall architecture of the project. Each of the individual components is explained in detail below.

Domain Hierarchy Creation

The Wikipedia corpus contains a vast category graph on top of its articles. We build the domain hierarchy using an "expand and reduce" paradigm (Doozer [2]), which allows us to first explore and exploit the concept space and then reduce the concepts that were initially deemed interesting to those closest to the actual domain of interest. The extracted hierarchy describes the HPC domain as specified by the AFRL scientists through a keyword-based domain description. The model was later pruned manually to remove ambiguous categories which, although relevant, were not considered essential to the domain. Please see Doozer's wiki for further details.
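To make the "expand and reduce" idea concrete, the following is a minimal sketch under stated assumptions, not Doozer's actual algorithm. The toy category graph, the per-category term sets, the keyword set, the overlap score, and the names `expand` and `reduce_to_domain` are all hypothetical and chosen only for illustration.

```python
from collections import deque

# Hypothetical toy category graph (parent category -> subcategories).
CATEGORY_GRAPH = {
    "Cognition": ["Memory", "Attention", "Cognitive science"],
    "Memory": ["Working memory", "Memory disorders"],
    "Attention": ["Attention disorders"],
    "Cognitive science": ["Cognitive scientists"],
}

# Hypothetical terms associated with each category (e.g., from its articles).
CATEGORY_TERMS = {
    "Cognition": {"cognition", "perception", "memory"},
    "Memory": {"memory", "recall"},
    "Working memory": {"memory", "attention", "task"},
    "Memory disorders": {"amnesia", "dementia", "memory"},
    "Attention": {"attention", "vigilance", "performance"},
    "Attention disorders": {"adhd", "diagnosis", "attention"},
    "Cognitive science": {"cognition", "psychology"},
    "Cognitive scientists": {"biography", "university"},
}

# Hypothetical keyword-based domain description.
DOMAIN_KEYWORDS = {"memory", "attention", "performance", "cognition", "fatigue"}


def expand(graph, seeds, max_depth):
    """Expand step: breadth-first walk from the seeds, at most max_depth levels."""
    seen = set(seeds)
    queue = deque((seed, 0) for seed in seeds)
    while queue:
        node, depth = queue.popleft()
        if depth == max_depth:
            continue  # do not descend below the depth limit
        for child in graph.get(node, []):
            if child not in seen:
                seen.add(child)
                queue.append((child, depth + 1))
    return seen


def reduce_to_domain(candidates, terms, keywords, threshold):
    """Reduce step: keep categories whose terms overlap enough with the keywords."""
    kept = set()
    for category in candidates:
        category_terms = terms.get(category, set())
        if not category_terms:
            continue
        score = len(category_terms & keywords) / len(category_terms)
        if score >= threshold:
            kept.add(category)
    return kept
```

Calling `expand(CATEGORY_GRAPH, ["Cognition"], 2)` collects all eight toy categories, and `reduce_to_domain` with a threshold of 0.5 then discards the ones whose terms are mostly outside the keyword description. The manual pruning described above would follow as a separate, expert-driven step.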

Triple Extraction

Extracting triples from free text in the biomedical domain has been a long-standing challenge in information extraction research. The first challenge is identifying compound multi-word biomedical entities, owing to non-standard usages that are obvious to human readers but not to computational analysis. This is further compounded by the use of pronouns and the co-reference resolution they require. The second challenge is extracting meaningful triples, from natural language sentences, that relate the identified compound entities. There are two important approaches in this area.

The problems mentioned above are mitigated if one decides on a fixed set of pre-determined entities (with the associated synonyms from a standard vocabulary) to function as subjects and objects, and on a fixed set of interesting predicates pertaining to the domain. We used this approach to connect the entities (leaf nodes) extracted as part of the domain hierarchy in component 1. We used lexical pattern-based fact extraction [4], using facts from linked open data (LoD) to bootstrap interesting patterns and their frequencies for a fixed set of relationship types. We then compared the normalized pattern frequency vectors with the vectors observed for pairs of entities in the domain hierarchy to determine the most probable relationship. We obtained a precision of 79% with this approach. However, we note that this approach is restrictive in the sense that both the relation types (predicates) we want to extract and the entities we want to connect must be known in advance. A similar approach used by the NLM applies rules that express certain types of relationships in natural language to extract triples from PubMed abstracts. They use the UMLS Semantic Network and the Metathesaurus to obtain the fixed set of predicates and entities (and their synonymous labels). We have incorporated a subset of these triples, from the Biomedical Knowledge Repository (BKR), in the HPC knowledge base.

A contrasting extraction approach, so-called open information extraction, uses NLP-based heuristics (and sometimes background knowledge) to extract triples. Here neither the entities nor the predicates come from a fixed pre-determined set; rather, they emerge from the heuristics used. They can, however, be filtered later to curate a subset that is relevant to a domain of interest. We used heuristics based on long-range dependencies among terms in a sentence, obtained from the dependency trees output by the Stanford parser for each sentence in all abstracts distributed by PubMed.
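The pattern-based step described above, which compares normalized pattern frequency vectors, can be illustrated with a small sketch. It assumes cosine similarity as the comparison measure, which the text does not specify. The patterns, counts, relation names, and function names below are hypothetical and are not taken from the project data.

```python
import math

# Hypothetical pattern counts per relationship type, as might be
# bootstrapped from known facts (X and Y stand for the two entities).
RELATION_PATTERNS = {
    "treats": {
        "X is used to treat Y": 40,
        "X for the treatment of Y": 30,
        "X causes Y": 1,
    },
    "causes": {
        "X causes Y": 50,
        "X leads to Y": 25,
        "X is used to treat Y": 2,
    },
}


def normalize(counts):
    """Scale a pattern-count vector to unit (L2) length."""
    norm = math.sqrt(sum(value * value for value in counts.values()))
    if norm == 0:
        return {}
    return {pattern: value / norm for pattern, value in counts.items()}


def cosine(unit_a, unit_b):
    """Cosine similarity of two vectors that are already unit length."""
    return sum(weight * unit_b.get(pattern, 0.0) for pattern, weight in unit_a.items())


def most_probable_relation(observed_counts, relation_patterns):
    """Return the best-matching relation type and all similarity scores."""
    observed = normalize(observed_counts)
    scores = {
        relation: cosine(observed, normalize(counts))
        for relation, counts in relation_patterns.items()
    }
    best = max(scores, key=scores.get)
    return best, scores
```

For an entity pair observed mostly with "X causes Y" and "X leads to Y", this sketch selects the hypothetical `causes` relation. Note that it can only ever return one of the relation types it was given, which mirrors the restriction mentioned above.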

Knowledge Base Creation

The triples extracted in component 2 are anchored to the domain hierarchy created in component 1. This is done by mapping only those triples (NLP-based or BKR) whose subject and object labels match at least one concept in the domain hierarchy. This way, only triples that are ‘related to’ concepts in the HPC domain are included in the knowledge base. Because the original open extraction using NLP techniques does not consider plurality (for entities and predicates) or tense variants, we used WordNet to normalize predicates and entity labels. However, predicates expressed in the passive voice are kept separate from those in the active voice, to preserve the switch in the subject and object roles of the entities involved. For example, predicates indicated by verbs in the active voice, such as ‘contribute’, ‘contributing’, and ‘contributed’, are all normalized to ‘contributes’, while those in the passive voice, such as ‘was contributed’, are handled differently.

The knowledge base is represented as an OWL file. The resulting OWL file does not semantically satisfy all the requirements of an ontology, because several named entities that experts would consider synonymous are treated as different instances. We do, however, have synonyms based on the anchor texts and redirect labels present in Wikipedia for concepts in the HPC domain hierarchy. For example, the Wikipedia page for ‘prolactostatin’ redirects to ‘dopamine’, and hence a relationship for prolactostatin is also considered to hold for dopamine. The domain hierarchy is not a strict taxonomy, because the Wikipedia category hierarchy is not a strict ‘part of’ or ‘instance of’ taxonomy. For the application component of the project, however, this has proven sufficient. To compare perceived relative latencies, we have serialized the knowledge base in Lucene and have also started using the Virtuoso triple store for the BKR triples.
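The anchoring step can be sketched as follows. This is an illustrative simplification: the small lemma table stands in for WordNet-based normalization, the passive-voice check is a naive prefix test, and the sketch assumes that both the subject and the object must match a hierarchy concept. All names and data below are hypothetical, apart from the prolactostatin and dopamine example taken from the text.

```python
# Hypothetical lemma table standing in for WordNet-based normalization.
PREDICATE_LEMMAS = {
    "contribute": "contributes",
    "contributing": "contributes",
    "contributed": "contributes",
    "contributes": "contributes",
}

# Redirect-based synonyms (the prolactostatin example comes from the text).
REDIRECTS = {"prolactostatin": "dopamine"}

# Hypothetical concepts from the domain hierarchy.
HIERARCHY_CONCEPTS = {"dopamine", "memory", "attention"}

# Naive passive-voice cue: the predicate starts with an auxiliary verb.
PASSIVE_PREFIXES = ("was ", "were ", "is ", "are ", "been ")


def normalize_predicate(predicate):
    """Normalize active-voice variants; leave passive predicates untouched."""
    cleaned = predicate.lower().strip()
    if cleaned.startswith(PASSIVE_PREFIXES):
        return cleaned  # kept separate so subject/object roles are not confused
    return PREDICATE_LEMMAS.get(cleaned, cleaned)


def resolve_entity(label):
    """Map a label to its redirect target when one is known."""
    cleaned = label.lower().strip()
    return REDIRECTS.get(cleaned, cleaned)


def anchor_triples(triples, concepts):
    """Keep triples whose subject and object both match a hierarchy concept."""
    anchored = []
    for subject, predicate, obj in triples:
        subject_concept = resolve_entity(subject)
        object_concept = resolve_entity(obj)
        if subject_concept in concepts and object_concept in concepts:
            anchored.append(
                (subject_concept, normalize_predicate(predicate), object_concept)
            )
    return anchored
```

With this sketch, a triple such as `("Prolactostatin", "contributed", "Memory")` is anchored as `("dopamine", "contributes", "memory")`, while a triple about entities outside the hierarchy is dropped.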

Scooner: Knowledge-Based Search and Browsing Application

A literature search and browsing application based on the knowledge base, Scooner (version 1), was developed to explore PubMed abstracts [3]. The fourth component of the architecture diagram above shows the implementation aspects of Scooner, including the technologies used. The full functionality of conventional search has been incorporated and further enhanced with additional browsable links to facilitate navigation. These links are created from knowledge base entities spotted in the retrieved search results, via their relationships. This provides a substantial improvement over the search provided by PubMed: in Scooner, users can navigate between abstracts via the relationships among the concepts mentioned in them, which gives webpage-like navigation over raw text abstracts that are devoid of hyperlinks (and hence were previously unconnected). In other words, this is a concrete realization of what we have termed the Relationship Web [1].

Moreover, because the search is based on the labels of the triple components, the abstracts retrieved when browsing via relationships are very likely to be those from which the relationships were extracted. As such, Scooner lets users verify relationships and understand them in the relevant surrounding context. Users can also create new meaningful trails by combining individual triples. The workbench in Scooner provides a central aggregation of important abstracts imported for further review. The workbench can be filtered to show only those abstracts that pertain to a selected set of triples or trails. Additionally, collaborative features were incorporated with which users can create persistent search projects, write comments on abstracts they find relevant, and share the (sub)projects with other users on a public dashboard.

The Scooner application has been tested by a group of 5 researchers at AFRL, who reported satisfaction with its current functionality and provided critical suggestions for finer control over the projects shared on the dashboard. The revised version that addresses these suggestions is being deployed in AFRL/RHPB and is currently in use. For a screencast of Scooner in use, please visit: http://knoesis.wright.edu/library/demos/scooner-demo/
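The relationship-based navigation and trail building described above can be illustrated with a toy sketch. It is not Scooner's implementation: the abstracts, identifiers, triples, and function names are hypothetical, and plain substring matching stands in for entity spotting and label-based search.

```python
# Hypothetical abstracts keyed by made-up identifiers.
ABSTRACTS = {
    "abs1": "Dopamine modulates working memory in the prefrontal cortex.",
    "abs2": "Working memory capacity predicts attention under fatigue.",
    "abs3": "Caffeine improves attention.",
}

# Hypothetical triples from the knowledge base.
TRIPLES = [
    ("dopamine", "modulates", "working memory"),
    ("working memory", "predicts", "attention"),
]


def abstracts_for_triple(triple, abstracts):
    """Find abstracts whose text mentions both the subject and object labels."""
    subject, _, obj = triple
    return sorted(
        abstract_id
        for abstract_id, text in abstracts.items()
        if subject in text.lower() and obj in text.lower()
    )


def next_hops(entity, triples):
    """Triples a user could follow from an entity spotted in an abstract."""
    return [triple for triple in triples if triple[0] == entity]


def build_trail(start_entity, triples, max_length):
    """Chain triples so that each object is the subject of the next triple."""
    trail = []
    current = start_entity
    while len(trail) < max_length:
        hops = next_hops(current, triples)
        if not hops:
            break
        trail.append(hops[0])  # follow the first available relationship
        current = hops[0][2]
    return trail
```

In this toy data, following the `dopamine` triple leads to `abs1`, and the trail from `dopamine` continues through `working memory` to `attention`, with `abs2` supporting the second hop. A workbench filter of the kind described above could reuse `abstracts_for_triple` over a selected set of triples.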

Publications

- [1] Amit Sheth and Cartic Ramakrishnan. Relationship Web: Blazing Semantic Trails between Web Resources. IEEE Internet Computing, July-August 2007, pp. 84-88.

- [2] Christopher Thomas, Pankaj Mehra, Roger Brooks, and Amit Sheth. Growing Fields of Interest: Using an Expand and Reduce Strategy for Domain Model Extraction. In Proceedings of the 2008 IEEE/WIC/ACM International Conference on Web Intelligence and Intelligent Agent Technology, Sydney, 2008, pp. 496-502.

- [3] D. Cameron, P. N. Mendes, A. P. Sheth, and V. Chan. Semantics-Empowered Text Exploration for Knowledge Discovery. 48th ACM Southeast Conference (ACMSE 2010), Oxford, Mississippi, April 15-17, 2010.

- [4] Christopher J. Thomas, Pankaj Mehra, Ramakanth Kavuluru, Amit P. Sheth, Wenbo Wang, and Gerhard Weikum. Automatic Domain Model Creation using Linked Open Data and Pattern-Based Information Extraction. Kno.e.sis Center Technical Report, 2010.